Video-mediated communication may be able to benefit from a number of novel technologies, but designing for a good experience requires considering purpose, visibility, and intersubjectivity for both partners.

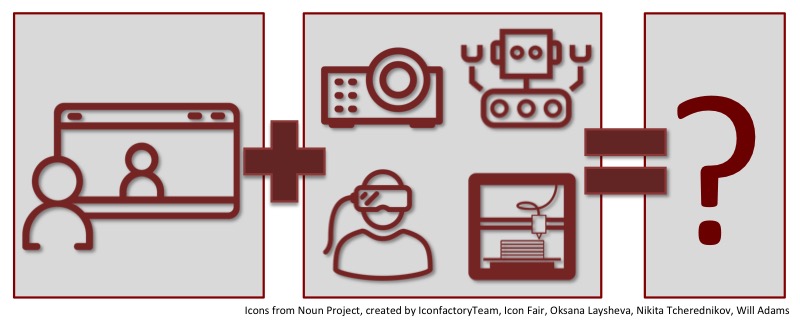

Skype, Google Hangouts, FaceTime, and ShareTable are all examples of real-time video-mediated communication technologies. Designing, implementing, and deploying novel systems of this sort is a big research priority for me, and every semester a few entrepreneurial students approach me with ideas for cool new technology to try in this space: robots, virtual reality, augmented reality, projector-camera systems, and more. Frequently, I ask them to consider a few things first. If you’re new to thinking about computer-mediated communication, these may be helpful for you as well (many of these ideas come from my work with play over videochat).

In this case, let’s assume the “base case” of two people—Alice and Bob—using a potential new technology to communicate with each other (though the questions below can definitely be expanded to consider multi-user interfaces). Consider:

- (Purpose) Why is Alice using this technology? Why is Bob? The answer should be specific (e.g., not just “to communicate,” but “to plan a surprise party for Eve together”) and may be different for the two parties. It’s good to come up with at least three such use cases for the next questions.

- (Visibility) What does Alice see using this technology? What does Bob see? Consider how Alice is represented in Bob’s space and how Alice can control her view (and then flip it and consider the same things for Bob). Consider whether this is appropriate for their purposes. For example, maybe Alice is wearing VR goggles and controlling a robot moving through Bob’s room. It’s cool that she can see 360-degree views and control her gaze direction, but what does Bob see? Does he see a robot with a screen that shows Alice’s face encased in VR goggles? Does this achieve a level of visibility that is appropriate for their purpose?

- (Intersubjectivity) How does Alice/Bob show something to the other person? How does Alice/Bob understand what the other person is seeing? The first important case to consider is how Alice/Bob bring attention to themselves and how they know if their partner is actually paying attention to them. If Alice is being projected onto a wall but the camera for the system is on a robot, it will likely be difficult for her to know when Bob is looking at her (i.e., when he’s looking at the wall display, it will seem that he’s looking away from the camera). It’s also useful to consider the ability to refer to other objects. Using current videochat, this is actually quite hard! If Alice points at her screen toward a book on the shelf behind Bob, Bob would have no idea where she’s pointing (other than generally behind him). Solving this is hard (it’s definitely an open problem in the field), but the technology should at least address it well enough to support the use cases you came up with under Purpose.

Generally, I find that new idea pitches tend to propose inventions that provide a reasonable experience for Alice but a poor one for Bob. It is important to consider purpose, visibility, and intersubjectivity for both of them in order to conceive a system that is actually compelling.

Also published on Medium.